You could talk for hours about when one path is more suitable than the other. Here too, as almost always in life when you face a choice between opposing options, the answer is: it depends!

If the chosen path is to build or maintain local infrastructure, there's little to add: plenty of literature online explains how to do it and how to do it well. If the chosen path is instead to migrate your infrastructure from a local (on-premises) system to the cloud, one of the first problems that emerges is: "How do I move terabytes, or perhaps petabytes, from my data center to a private server on the network?"

How long would it take with traditional systems?

If you had to transfer 300 terabytes over an excellent 1 Gbit/s connection, and could keep the link fully saturated the whole time, it would take 27 days, 18 hours, and 40 minutes. It's not just a matter of time, but also of data consistency: after almost 28 days the data could have changed or no longer be relevant. That works out to roughly 2 hours and 13 minutes per terabyte, which is extremely slow for a migration.
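The figure above can be reproduced with a few lines of Python. This is a minimal sketch that assumes decimal units (1 TB = 10^12 bytes, 1 Gbit/s = 10^9 bits per second) and an ideal link with no protocol overhead; the function names `transfer_time` and `describe` are ours, chosen purely for illustration.

```python
from datetime import timedelta

BITS_PER_TERABYTE = 8 * 10**12  # decimal terabyte: 10^12 bytes, 8 bits each


def transfer_time(terabytes: float, gbit_per_s: float) -> timedelta:
    """Ideal transfer time, ignoring protocol overhead and link contention."""
    total_bits = terabytes * BITS_PER_TERABYTE
    seconds = total_bits / (gbit_per_s * 10**9)
    return timedelta(seconds=seconds)


def describe(duration: timedelta) -> str:
    """Format a duration as days, hours, and whole minutes."""
    hours, remainder = divmod(duration.seconds, 3600)
    minutes = remainder // 60
    return f"{duration.days} days, {hours} hours, {minutes} minutes"


print(describe(transfer_time(300, 1)))  # 27 days, 18 hours, 40 minutes
print(describe(transfer_time(1, 1)))    # 0 days, 2 hours, 13 minutes
```

On a real link the sustained throughput is lower than the nominal bandwidth, so these numbers should be read as a best case.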

How we tackle the problem.

At Moku we work with AWS, and when we had to tackle this problem we used the family of products designed precisely for this purpose: the AWS Snow Family.

This group of services is designed not only for data migration, but also to let customers run workloads in extreme environments, in places without data centers, or where network connectivity is unstable. Many people treat these services only as "hard drives" for transferring data. That view isn't technically wrong, but they are much more than that.

How the AWS service works.

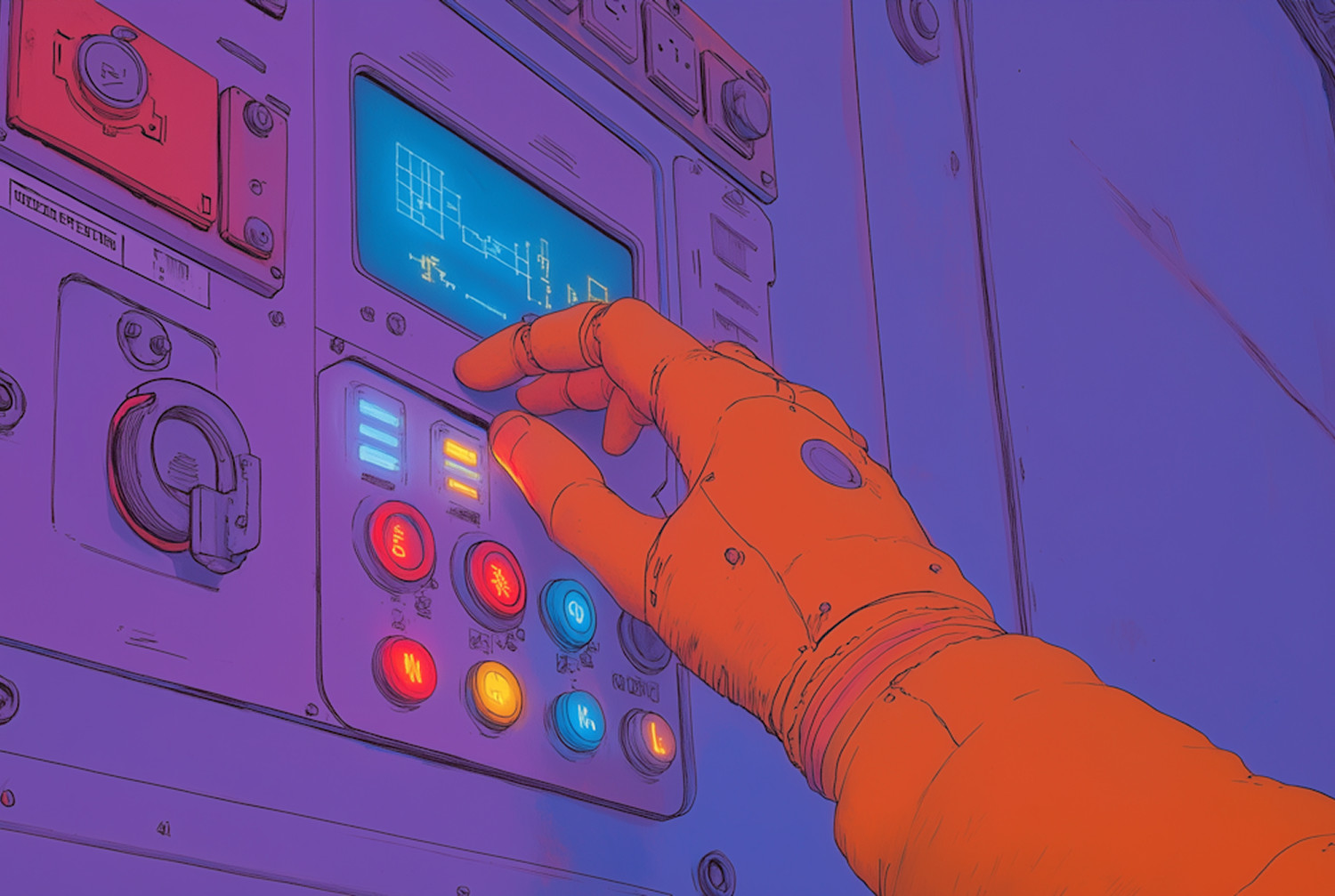

AWS offers physical devices with different capacities, most equipped with integrated computing functionality; these devices allow you to process and transfer data to AWS in offline mode.

These are storage and edge computing devices owned by AWS, which takes care of security, monitoring, storage, management, and processing. The services are divided into three categories: AWS Snowcone, AWS Snowball Edge, and AWS Snowmobile.

AWS Snowcone.

This is the smallest edge computing and data transfer device in the family. You can use it to collect, process, and transfer data (up to 8 TB per device on the HDD model, or 14 TB on the SSD model) to AWS either offline by shipping the device, or online using AWS DataSync.

AWS Snowball Edge.

The devices in this category are designed to collect data, process it (for example with machine learning), and store it in environments with unstable connections, such as the manufacturing, industrial, or transportation sectors, or in extremely remote locations, before sending everything to AWS. These devices can also be rack-mounted and grouped into clusters to create larger temporary installations.

If we're using AWS Snowball Edge, we probably need to both collect and process data. At this point we need to ask ourselves: "Do we primarily need computing capacity, or are we more interested in storage?" AWS provides two device types for the two use cases and, even though both operations are technically possible on both, it's advisable to choose the one optimized for the predominant type of activity.

Specifically, AWS Snowball Edge devices are divided into:

AWS Snowball Edge storage optimized: ideal for local storage and large-scale data transfer, equipped with 40 vCPUs of computing capacity coupled with 80 TB of usable block storage.

AWS Snowball Edge compute optimized: 52 vCPUs, 42 TB of usable block or object storage, and an optional GPU for use cases such as advanced machine learning and full-motion video analysis in disconnected environments.
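To get a feel for sizing, here is a rough sketch that estimates how many devices a migration would need. It assumes the usable capacities quoted above (80 TB and 42 TB), which may differ for other device generations, and it ignores any space reserved for compute workloads; `USABLE_TB` and `devices_needed` are hypothetical names used only for this example.

```python
import math

# Usable capacities in terabytes, taken from the figures quoted in this article.
# Other device generations may offer different capacities.
USABLE_TB = {
    "storage_optimized": 80,
    "compute_optimized": 42,
}


def devices_needed(dataset_tb: float, device_type: str) -> int:
    """Minimum number of devices whose combined usable space holds the dataset."""
    if dataset_tb <= 0:
        raise ValueError("dataset_tb must be positive")
    return math.ceil(dataset_tb / USABLE_TB[device_type])


print(devices_needed(300, "storage_optimized"))  # 4
print(devices_needed(300, "compute_optimized"))  # 8
```

For the 300 TB example used earlier, the storage-optimized device clearly needs fewer units, which is consistent with the advice to pick the type that matches the predominant activity.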

AWS Snowmobile.

Sometimes even this is not enough: the data is truly massive and calls for heavy transport, namely a truck! You can request that a semi-trailer truck be sent to the location where the data resides. There it is connected to your network and, once it has received all the data, it travels securely to an AWS Region. Each truck can carry a "maximum load" of 100 petabytes, and once it reaches its destination everything is transferred to Amazon S3.

Where should I put my data?

There may be situations where the primary objective is to keep all data on-site. In these cases, running machines in an internal data center can be an excellent solution. It must be said that this requires considerable skills and resources to manage security, backups, services, risks, maintenance, and infrastructure scalability, but in return you get an on-site solution whose costs, although paid upfront, are paid only once.

In all other cases, we feel we can recommend a cloud infrastructure that provides these features with a clear SLA written in black and white. These platforms offer infrastructure as a service on a pay-per-use model and bring one great advantage: you no longer have to worry about infrastructure, so you can dedicate yourself to the business and to the activities that generate value.